NLP vs. LLMs: How Modern AI Chatbots Really Work

NLP vs. LLMs is not an either-or choice. Modern AI chatbots use natural language processing (NLP) to classify intent, extract entities, and route tickets, and large language models (LLMs) to generate grounded answers on top of that structure. The two are complementary layers in a single pipeline, not competing technologies. IrisAgent’s production chatbot runs exactly this architecture and holds validated accuracy above 95% while resolving 50%+ of support tickets without a human touching them.

This guide is for support leaders and engineers who keep hearing “we replaced NLP with an LLM” and want to know whether that is actually true, what each layer does in a real chatbot, and what goes wrong when teams skip one of them.

What Is NLP?

Natural language processing is the field of AI that teaches machines to read, parse, and make structured sense of human language. It goes back to the 1950s and covers a stack of well-defined tasks: tokenization, part-of-speech tagging, named entity recognition, intent classification, sentiment analysis, and machine translation.

The output of a classic NLP model is almost always a label or a structured object. Feed it “Can you refund my last two orders?” and it returns something like {intent: refund_request, entities: {order_count: 2, timeframe: last}}. That output is cheap to compute, easy to audit, and trivial to route to the right workflow.
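To make the "label or structured object" idea concrete, here is a minimal sketch of a rule-based parser that produces the kind of output shown above. The keyword lists, the `parse_message` name, and the regex are all illustrative assumptions; a production system would use a trained classifier rather than keyword matching.

```python
import re

# Hypothetical keyword rules; a production classifier would be a trained model.
INTENT_KEYWORDS = {
    "refund_request": ("refund", "money back"),
    "password_reset": ("password", "reset my login"),
}
NUMBER_WORDS = {"one": 1, "two": 2, "three": 3, "four": 4, "five": 5}


def parse_message(text: str) -> dict:
    """Turn a free-text message into a structured intent + entities record."""
    lowered = text.lower()

    # Intent: first rule whose keyword appears in the message.
    intent = "unknown"
    for name, keywords in INTENT_KEYWORDS.items():
        if any(k in lowered for k in keywords):
            intent = name
            break

    entities = {}
    # Entity: "<timeframe> <count> orders", e.g. "last two orders".
    match = re.search(r"\b(last|past|previous)\s+(\w+)\s+orders?\b", lowered)
    if match:
        entities["timeframe"] = match.group(1)
        count_word = match.group(2)
        if count_word.isdigit():
            entities["order_count"] = int(count_word)
        elif count_word in NUMBER_WORDS:
            entities["order_count"] = NUMBER_WORDS[count_word]

    return {"intent": intent, "entities": entities}


result = parse_message("Can you refund my last two orders?")
print(result)
```

Because the result is a plain dictionary, a downstream router can branch on `result["intent"]` without parsing any prose.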

NLP methods have ranged from hand-written rules and regex (1950s–1980s), to statistical models like hidden Markov models and conditional random fields (1990s–2000s), to neural networks and transformers (2010s onward). The techniques changed. The job did not: turn messy human language into structured signal a computer can act on.

What Are LLMs?

Large language models are a specific kind of NLP model, built on the transformer architecture Google introduced in 2017, and trained on hundreds of billions to trillions of tokens of text. Instead of predicting a label, an LLM predicts the next token (roughly a word or word fragment) in a sequence, then the next, then the next, until it has written a full response.

That single change, from classifying text to generating text, is the reason LLMs feel like a leap rather than an incremental upgrade. An LLM can summarize a ticket history, draft a reply in the customer’s preferred tone, explain a billing discrepancy in plain English, or walk a user through a reset flow, all without being explicitly trained on those exact tasks. Earlier NLP systems could not.
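The generation loop can be illustrated with a toy model. This is only a sketch: the probability table is invented, it works on whole words rather than tokens, and it always picks the most likely continuation (greedy decoding), whereas a real LLM computes these probabilities with a transformer and usually samples from them.

```python
# Toy "language model": for each word, the probabilities of the next word.
# These numbers are invented for illustration; a real LLM computes them with
# a transformer over a vocabulary of tens of thousands of tokens.
NEXT_WORD_PROBS = {
    "<start>": {"your": 0.7, "the": 0.3},
    "your": {"refund": 0.6, "order": 0.4},
    "refund": {"is": 0.8, "was": 0.2},
    "is": {"approved": 0.5, "pending": 0.3, "<end>": 0.2},
    "approved": {"<end>": 1.0},
}


def generate(max_words: int = 10) -> str:
    """Greedy decoding: repeatedly append the most probable next word."""
    words = []
    current = "<start>"
    for _ in range(max_words):
        candidates = NEXT_WORD_PROBS.get(current, {"<end>": 1.0})
        current = max(candidates, key=candidates.get)
        if current == "<end>":
            break
        words.append(current)
    return " ".join(words)


print(generate())  # prints "your refund is approved"
```

Nothing in the loop checks whether the sentence is true. The model only follows the most probable path, which is why ungrounded output can be fluent and wrong at the same time.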

The tradeoff is control. An NLP intent classifier either outputs refund_request or it does not. An LLM outputs prose, and that prose can be wrong. Research out of Stanford in 2024 found that ungrounded LLMs hallucinate in 15–30% of customer support responses, depending on query complexity. That is the central problem IrisAgent’s Hallucination Removal Engine exists to solve, and it is why every production AI chatbot still needs an NLP layer underneath the LLM.

NLP vs. LLMs: The Key Differences

The cleanest way to hold NLP vs. LLMs in your head is to think of NLP as the category and LLMs as one branch inside it. Every LLM is an NLP system. Not every NLP system is an LLM.

Here is how the two compare on the dimensions that matter for a chatbot:

| Dimension | Classic NLP | LLMs |
| --- | --- | --- |
| Primary job | Classify, extract, tag | Generate text |
| Output shape | Structured labels or entities | Free-form prose |
| Compute cost | Low (milliseconds, CPU) | High (hundreds of ms, GPU) |
| Auditability | High; each decision is a discrete label | Low; prose is not easily diffed |
| Hallucination risk | Near zero | 15–30% without grounding |
| Training data needed | Thousands of examples per intent | None for in-context use; billions for pretraining |
| Typical support use | Intent routing, triage, sentiment, language detection | Summarization, drafted replies, explanations |

Five differences are worth pausing on:

Compute.

An NLP intent classifier runs in under 50 ms on a laptop CPU. A frontier LLM can take 500–2000 ms on a GPU cluster. At 1,000 tickets an hour, that gap costs real money.

Auditability.

When a support leader asks “why did the bot route this ticket to billing instead of abuse?”, an NLP classifier can show its confidence scores on every intent. An LLM produces a paragraph that has to be re-prompted to explain itself.

Hallucination.

Structured NLP output cannot hallucinate a refund amount or invent a policy clause. An ungrounded LLM can and will. This is not a theoretical risk; it shows up in 15–30% of responses without guardrails.

Data hunger.

NLP classifiers need labeled examples per intent. LLMs can handle a new intent with a one-line prompt instruction, which is why LLMs feel faster to deploy until you hit the accuracy ceiling.

Scope.

NLP models are narrow by design. LLMs are generalists. Both traits are useful in different layers of the same chatbot.
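The auditability point is easiest to see in code. The sketch below assumes a classifier that emits one raw score per intent and turns those scores into confidences with a softmax; the intent names and score values are made up for illustration.

```python
import math


def softmax(scores: dict) -> dict:
    """Convert raw classifier scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {label: math.exp(s - peak) for label, s in scores.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}


# Hypothetical raw scores (logits) for one ticket; values are made up.
raw_scores = {"billing": 2.0, "abuse": 1.0, "shipping": -1.0}

confidences = softmax(raw_scores)
routed_to = max(confidences, key=confidences.get)

# The audit trail is just the full score table plus the chosen label.
for label, p in sorted(confidences.items(), key=lambda kv: -kv[1]):
    print(f"{label:10s} {p:.2f}")
print("routed to:", routed_to)
```

Logging this table for every ticket answers the "why billing instead of abuse?" question directly, with no second model call needed.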

How NLP Works Inside Modern AI Chatbots

A production AI chatbot is not a single model; it is a pipeline. The most reliable production architecture, and the one IrisAgent runs, is a five-stage flow where NLP handles the first, second, and fifth stages, retrieval handles the third, and the LLM handles the fourth.

Intake and NLP pre-processing.

The incoming ticket goes through tokenization, language detection, PII redaction, and spell correction. These are classic NLP tasks. They are fast and deterministic.

NLP intent and entity classification.

A fine-tuned classifier tags the ticket with an intent (for example, password_reset, refund_request, account_update) and extracts entities (order IDs, product names, account tiers). This structured output drives the next decision.

Retrieval and grounding.

The system pulls the most relevant knowledge base articles, SOPs, and ticket history for that specific intent. Without this step, the LLM would answer from its training data, which is where hallucinations come from.

LLM response generation.

The LLM writes the response, constrained by the retrieved documents. This is retrieval-augmented generation (RAG), and it is the industry-standard way to keep LLMs grounded in your own data instead of their pretraining corpus.

NLP post-processing and validation.

A final NLP layer checks confidence, scans for hallucinated facts against the cited sources, flags low-confidence answers for human handoff, and tags the outcome for reporting.
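The five stages above can be sketched end to end in a few dozen lines. This is a simplified illustration, not IrisAgent's implementation: the knowledge base, keyword classifier, and function names are hypothetical, and `generate` is a stand-in for a real LLM call. The validation rule (every number in the reply must appear in a source document) is one simple example of a grounding check.

```python
import re

# Hypothetical knowledge base keyed by intent; contents are invented.
KNOWLEDGE_BASE = {
    "refund_request": ["Refunds are issued within 5 business days of approval."],
    "password_reset": ["Use the 'Forgot password' link on the sign-in page."],
}
INTENT_KEYWORDS = {"refund_request": ("refund",), "password_reset": ("password",)}


def preprocess(text: str) -> str:
    """Stage 1: redact email addresses (PII) and normalize whitespace."""
    text = re.sub(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+", "[EMAIL]", text)
    return " ".join(text.split())


def classify_intent(text: str) -> str:
    """Stage 2: keyword stand-in for a trained intent classifier."""
    lowered = text.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(k in lowered for k in keywords):
            return intent
    return "unknown"


def retrieve(intent: str) -> list:
    """Stage 3: fetch the documents that ground the answer."""
    return KNOWLEDGE_BASE.get(intent, [])


def generate(question: str, docs: list) -> str:
    """Stage 4: stand-in for an LLM call constrained to the retrieved docs."""
    return "Thanks for reaching out. " + " ".join(docs)


def validate(answer: str, docs: list) -> bool:
    """Stage 5: every number in the answer must appear in a source document."""
    source_numbers = set(re.findall(r"\d+", " ".join(docs)))
    return all(n in source_numbers for n in re.findall(r"\d+", answer))


def handle_ticket(raw_text: str) -> dict:
    text = preprocess(raw_text)
    intent = classify_intent(text)
    docs = retrieve(intent)
    if not docs:  # nothing to ground on: do not let the model guess
        return {"intent": intent, "action": "handoff", "reply": None}
    reply = generate(text, docs)
    action = "send" if validate(reply, docs) else "handoff"
    return {"intent": intent, "action": action, "reply": reply if action == "send" else None}


print(handle_ticket("Hi, I'm jo@example.com. When will my refund arrive?"))
print(handle_ticket("Do you sell gift cards?"))
```

The first ticket is classified, grounded, and sent. The second has no matching intent or documents, so it is handed off instead of being answered from the model's own memory.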

Skip stage 2, and the chatbot has no idea which workflow the ticket belongs to, so it treats every ticket the same. Skip stage 3, and the LLM makes things up. Skip stage 5, and nobody catches when the LLM is wrong until a customer complains.

This is why “we replaced NLP with an LLM” is almost never accurate for a production system. What teams usually mean is “we replaced our rule-based NLP intent classifier with a neural one, and we added an LLM for response generation.” The NLP layer is still there; it just moved under the hood.

Where NLP Still Beats LLMs (And Vice Versa)

Support leaders evaluating vendors keep running into the same question: if LLMs are so capable, why do we still need separate NLP components? The answer is that each layer has jobs the other layer does worse.

NLP wins when:

The answer is a label, not a sentence.

Routing a ticket to the right queue is a classification problem. Using a 70-billion-parameter LLM for a decision that needs one of six labels is expensive overkill.

Latency matters.

Voice bots, real-time agent assist, and live chat triage all need sub-200-ms response times. Classic NLP models deliver that; most LLMs do not.

Auditability matters.

Regulated industries (healthcare, fintech, insurance) require explainable decisions. Confidence scores from an NLP classifier are easier to defend to a compliance reviewer than an LLM’s prose.

LLMs win when:

The answer is prose.

Summarizing a ten-message thread, drafting a reply that matches a customer’s tone, or walking a user through a three-step fix all require fluent generation. Classic NLP cannot do this.

The task is zero-shot.

New intents, new products, or edge cases that have no labeled training data still get a reasonable response from an LLM. Classic NLP classifiers fail on anything outside their training distribution.

The task is multi-turn reasoning.

Following a conversation across several messages, holding state, and adapting the next question based on the previous answer all play to LLM strengths.

The production pattern that works is to use each layer where it is strongest. That is the difference between NLP vs. LLMs as a philosophical debate and NLP and LLMs as a system design.

Common Mistakes When Building Chatbots With NLP + LLMs

Three mistakes come up in almost every support AI rollout. They are all expensive and all avoidable.

First, skipping the grounding layer. Teams see a demo where an LLM gives a perfect answer from its training data, ship it to production, and watch hallucinations spike once real customers ask about current pricing, recent policy changes, or account-specific data the model was never trained on. Grounding is not optional for customer support. The Zendesk 2024 CX Trends report flagged AI trust as the #1 concern for support leaders, and hallucination is the root cause.

Second, using an LLM where an NLP classifier belongs. Teams route every ticket through a general-purpose LLM because it is easier than training a dedicated intent model. Then they are surprised when GPU costs rise 10x and latency creeps past two seconds on live chat. The fix is to run cheap NLP classification first and only invoke the LLM when the task actually needs generation.
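A minimal sketch of that "classify first, generate only when needed" gate is shown here. The intents, queue names, and keyword rules are hypothetical, and `call_llm` is a placeholder that only counts invocations so the saving is visible.

```python
# Intents that can be resolved by routing alone (hypothetical examples).
ROUTE_ONLY_INTENTS = {"spam": "discard_queue", "abuse_report": "trust_and_safety"}

llm_calls = 0  # counter so the savings are visible


def cheap_classifier(text: str) -> str:
    """Keyword stand-in for a fast CPU intent model."""
    lowered = text.lower()
    if "unsubscribe" in lowered or "click here" in lowered:
        return "spam"
    if "harass" in lowered:
        return "abuse_report"
    return "needs_answer"


def call_llm(text: str) -> str:
    """Placeholder for the expensive generation step."""
    global llm_calls
    llm_calls += 1
    return f"[drafted reply to: {text}]"


def handle(text: str) -> dict:
    intent = cheap_classifier(text)
    if intent in ROUTE_ONLY_INTENTS:
        # A label is enough here, so no generation cost is paid.
        return {"intent": intent, "queue": ROUTE_ONLY_INTENTS[intent], "reply": None}
    return {"intent": intent, "queue": "support", "reply": call_llm(text)}


tickets = [
    "Click here to win a prize",
    "Another user keeps harassing me",
    "How do I change my billing address?",
]
results = [handle(t) for t in tickets]
print(f"{llm_calls} LLM call(s) for {len(tickets)} tickets")  # prints "1 LLM call(s) for 3 tickets"
```

In this toy run only one of three tickets reaches the generation step. The real ratio depends entirely on a team's ticket mix.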

Third, skipping evaluation. Support leaders who would never ship an onboarding flow without a staging environment happily ship AI chatbots without a held-out test set. The result is a bot that looks great in a demo and fails in silence on production traffic. Every NLP and LLM component in the pipeline needs its own metrics: intent accuracy for the classifier, faithfulness and citation rate for the LLM, and end-to-end resolution rate for the full system.
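As a starting point, the per-component metrics can be computed with a few small functions over a held-out set. The sketch below covers intent accuracy, citation rate, and end-to-end resolution rate; the sample data and field names are invented, and faithfulness is left out because scoring it generally needs a human or model judge rather than a simple count.

```python
def intent_accuracy(predicted: list, expected: list) -> float:
    """Share of held-out tickets where the classifier picked the right intent."""
    correct = sum(p == e for p, e in zip(predicted, expected))
    return correct / len(expected)


def citation_rate(replies: list) -> float:
    """Share of generated replies that cite at least one source document."""
    return sum(1 for r in replies if r["citations"]) / len(replies)


def resolution_rate(outcomes: list) -> float:
    """Share of tickets closed without a human handoff."""
    return sum(1 for o in outcomes if o == "resolved") / len(outcomes)


# Tiny invented held-out set, only to show the calculations.
expected = ["refund", "reset", "refund", "billing"]
predicted = ["refund", "reset", "billing", "billing"]
replies = [
    {"text": "reply A", "citations": ["kb-12"]},
    {"text": "reply B", "citations": []},
    {"text": "reply C", "citations": ["kb-7", "kb-9"]},
    {"text": "reply D", "citations": ["kb-3"]},
]
outcomes = ["resolved", "handoff", "resolved", "handoff"]

print(f"intent accuracy: {intent_accuracy(predicted, expected):.2f}")
print(f"citation rate:   {citation_rate(replies):.2f}")
print(f"resolution rate: {resolution_rate(outcomes):.2f}")
```

Tracking the three numbers separately matters because a drop in resolution rate can come from the classifier, the retriever, or the generator, and a single end-to-end score cannot say which.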

How IrisAgent Combines NLP and LLMs

IrisAgent’s platform runs the five-stage pipeline above across live deployments at Dropbox, Zuora, and Teachmint. The NLP layer handles routing, entity extraction, and sentiment. The Hallucination Removal Engine enforces grounding and validates every LLM response against the source documents it cites. The NLP post-processing layer catches low-confidence answers before they reach the customer and hands them off to a human agent.

The measurable outcomes of getting the NLP vs. LLMs mix right show up in three places:

Validated accuracy above 95% across enterprise deployments, versus 70–85% for ungrounded LLM chatbots

50%+ of support tickets fully resolved without a human agent, because routing, lookup, and action all happen in one pipeline

24-hour deployment instead of 6-week custom development, because the NLP intent models and LLM prompts come preconfigured for support use cases

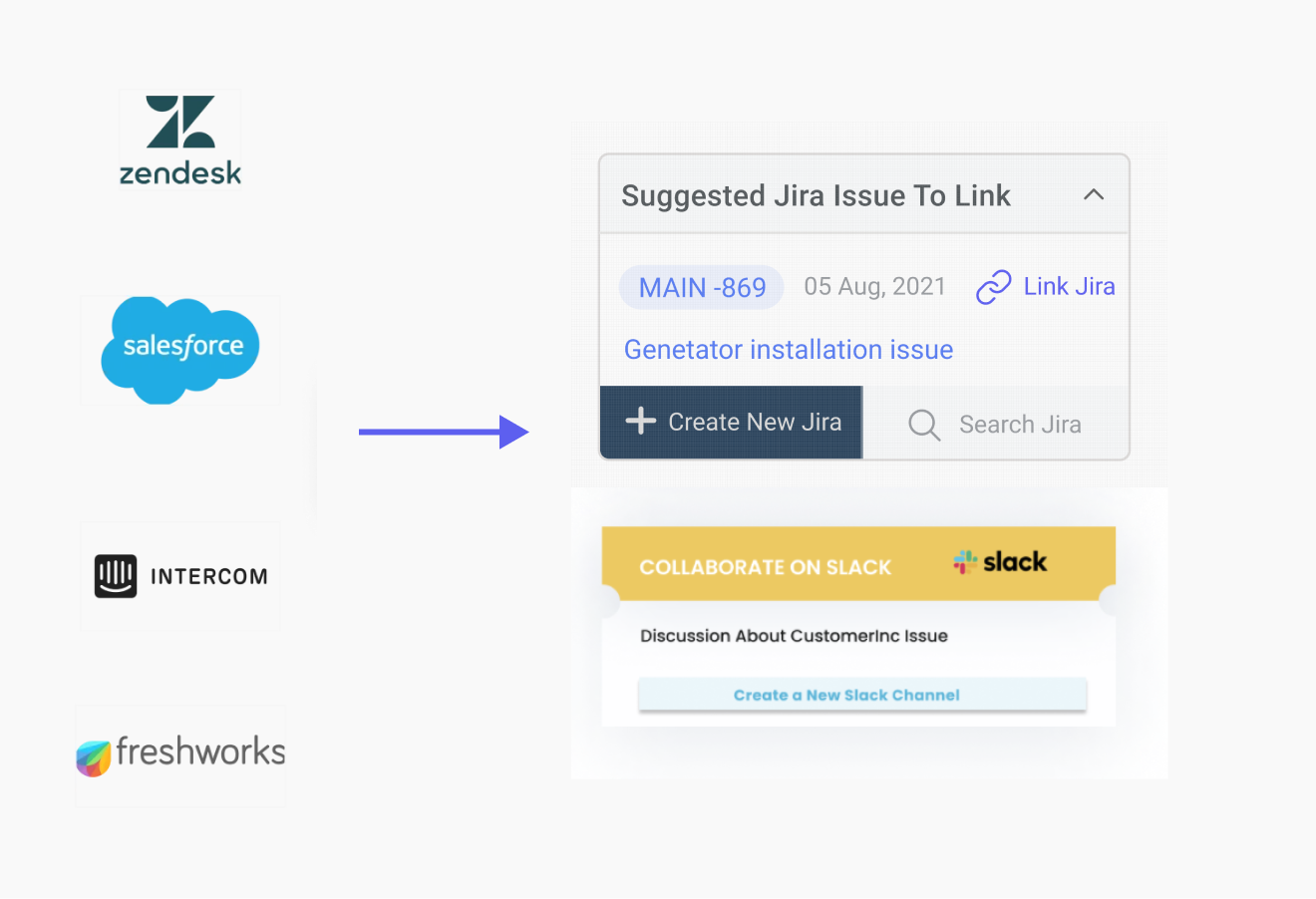

Unlike Forethought, which requires a 20,000-ticket data minimum to train its NLP classifiers, or Decagon, which runs a 6-week custom LLM build, IrisAgent ships with support-specific NLP models already trained and integrates with Zendesk, Salesforce, Intercom, and Freshdesk in a one-click install. The same system works on day one for a 10-agent team and a Fortune 500 support org.

Next Steps

The short version of NLP vs. LLMs is that it is the wrong frame. The right frame is NLP and LLMs, layered together inside a chatbot pipeline where each does the work it is best at. If you remember nothing else from this guide, remember these five things:

NLP is the category. LLMs are one branch of it.

Classic NLP classifies and extracts; LLMs generate.

Production chatbots use both, in a five-stage pipeline.

Grounding (RAG) is what keeps LLMs from hallucinating in customer support.

Validated accuracy above 95% is achievable when the NLP and LLM layers are designed together, not bolted on.

If you are evaluating an AI chatbot for your support team, ask the vendor which of the five pipeline stages they own, which they rely on a third-party model for, and what their validated accuracy is on your data (not a demo dataset). The answers to those three questions tell you more than any product page will.

Book a 20-minute IrisAgent demo to see the full NLP and LLM pipeline running on a live support queue, or read the Dropbox case study for the numbers on how 160,000 agent minutes were saved with the same architecture.

Frequently Asked Questions

What is the difference between NLP and LLMs?

NLP is the broader field of teaching machines to understand human language. LLMs are a specific kind of NLP model, built on transformers and trained on massive text datasets, that specialize in generating text. Every LLM is an NLP system, but not every NLP system is an LLM. Classic NLP models classify and extract; LLMs generate. Most production chatbots use both.

Do LLMs replace NLP?

No. LLMs replace certain older NLP techniques, like hand-written rules for intent classification, but they do not replace the broader NLP stack. Production chatbots still need NLP for pre-processing, intent routing, entity extraction, and post-response validation. LLMs sit in the middle of that stack, not on top of it. When a vendor says they replaced NLP with an LLM, they usually mean they upgraded one stage of their NLP pipeline.

How do AI chatbots use NLP?

AI chatbots use NLP to pre-process incoming messages (tokenization, language detection, PII redaction), classify the user's intent, extract entities like order IDs or product names, select the right knowledge base content for retrieval, and validate the final response. In a five-stage chatbot pipeline, NLP handles stages 1, 2, and 5. Stages 3 and 4 are retrieval, which is keyed on the NLP intent, and LLM response generation.

Is ChatGPT an NLP system?

Yes. ChatGPT is an LLM, which is one type of NLP system. It was trained on a large corpus of text using NLP techniques (tokenization, transformer attention, instruction tuning) and performs NLP tasks (text generation, summarization, translation). When people ask NLP vs. ChatGPT, they usually mean classic NLP classifiers vs. LLMs like ChatGPT, which is a more specific question than NLP vs. LLMs writ large.

Why do production AI chatbots still need NLP if they have an LLM?

Three reasons: cost, latency, and reliability. NLP classifiers run in under 50 ms on CPU and return structured, auditable labels. LLMs take 500–2000 ms on GPU and return prose that can hallucinate. A well-designed chatbot uses NLP for the cheap, high-volume classification work and reserves the LLM for the generation tasks that actually need fluent text output. Running every ticket through an LLM alone is expensive, slow, and risky.
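A quick back-of-the-envelope calculation shows what those latency figures mean at volume. The sketch below uses the latencies quoted in this guide and the 1,000 tickets per hour example; it assumes tickets are processed one at a time and puts no price on compute, because CPU and GPU rates vary by provider.

```python
TICKETS_PER_HOUR = 1_000

# Per-ticket latencies taken from the figures quoted in the text.
NLP_MS = 50  # upper bound: the classifier runs in under 50 ms
LLM_MS_LOW, LLM_MS_HIGH = 500, 2_000


def busy_seconds_per_hour(latency_ms: int, tickets: int = TICKETS_PER_HOUR) -> float:
    """Total model time spent per hour if tickets are processed one at a time."""
    return latency_ms * tickets / 1_000


nlp_seconds = busy_seconds_per_hour(NLP_MS)
llm_low = busy_seconds_per_hour(LLM_MS_LOW)
llm_high = busy_seconds_per_hour(LLM_MS_HIGH)

print(f"NLP classifier: {nlp_seconds:.0f} s of compute per hour")
print(f"LLM: {llm_low:.0f} to {llm_high:.0f} s of compute per hour")
print(f"Ratio: {llm_low / nlp_seconds:.0f}x to {llm_high / nlp_seconds:.0f}x")
```

At these figures the LLM needs at least 10 to 40 times as much model time per hour as the classifier, and that time is spent on GPUs rather than CPUs.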

How does IrisAgent prevent LLM hallucinations?

IrisAgent's Hallucination Removal Engine grounds every LLM response in the customer's own knowledge base, SOPs, and ticket history using retrieval-augmented generation, then validates the generated answer against the cited sources before sending. Responses that fail validation or fall below a confidence threshold are handed off to a human agent. Validated accuracy stays above 95% across enterprise deployments, compared to 15–30% hallucination rates reported for ungrounded LLMs.